Modern security operations face a growing architectural challenge: collect telemetry from everywhere, process it in real time, and route it to multiple platforms while maintaining data sovereignty, avoiding agent sprawl, and keeping costs under control.

Single-model collection strategies force security teams to make compromises. Agent-only models create operational overhead and maintenance risk. Agentless-only approaches simplify operations but limit depth and flexibility. Cloud-processed pipelines introduce governance and compliance friction.

VirtualMetric DataStream addresses these constraints through a hybrid security data collection architecture designed to scale across modern SOC environments without tradeoffs.

A hybrid approach to security telemetry collection

VirtualMetric DataStream is built on a simple principle: use agentless collection wherever possible, and deploy agents only where they provide clear additional value.

Rather than enforcing a single collection model, the architecture supports both agentless and agent-based methods, so organizations can choose based on operational requirements, security constraints, and coverage needs. This hybrid approach enables broad telemetry coverage without unnecessary software sprawl, while preserving deep visibility where it is actually needed.

Agentless-first collection using native protocols

At the core of the architecture is VirtualMetric’s agentless collection capability. Where many platforms require an agent on every system, VirtualMetric collects telemetry from Windows, Linux, macOS, Solaris, and AIX systems without installing any software on target machines.

Secure, read-only access is established using native operating system protocols. For Windows systems, DataStream uses Windows Remote Management (WinRM) to collect Event Logs, performance counters, and system metrics. For Unix-based systems, it uses SSH connections to gather syslog data, audit logs, and system information.

Because these mechanisms are already present in enterprise environments, onboarding is fast and operational overhead is minimal. Agentless collection integrates with enterprise identity infrastructure through credential stores and Active Directory service accounts, eliminating hardcoded credentials while maintaining secure access.

This approach is particularly effective in tightly controlled or compliance-sensitive environments where agent installation is restricted.

Agents where deeper visibility or resilience is required

While agentless collection covers the majority of enterprise telemetry needs, VirtualMetric does not treat it as universally sufficient. Agents are available, but optional.

The VirtualMetric Agent is deployed selectively on systems requiring deep process-level telemetry, operating in isolated or unreliable networks, or benefiting from local buffering and processing. Agents are lightweight, support crash recovery, and can execute full DataStream pipelines locally when needed.

Where many solutions process everything centrally, which requires substantial cloud infrastructure and bandwidth, VirtualMetric can push filtering, transformation, masking, and aggregation to the edge when beneficial. This reduces bandwidth consumption and distributes processing load without creating a central bottleneck.
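To make the idea of edge processing concrete, here is a minimal Python sketch of filtering, masking, and aggregating events before they leave a host. It is an illustration only, not the DataStream pipeline syntax: the event fields (`host`, `event_id`, `user`, `severity`), the function names, and the severity threshold are all assumptions made for this example.

```python
import hashlib
from collections import Counter


def mask_user(value: str) -> str:
    """Replace a username with a short, stable SHA-256 digest (hypothetical masking rule)."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]


def process_at_edge(events, min_severity=3):
    """Filter low-severity events, mask usernames, and aggregate duplicates.

    Returns one summary record per (host, event_id, masked user) with a count,
    so repeated events cost one record on the wire instead of many.
    """
    counts = Counter()
    for event in events:
        if event["severity"] < min_severity:
            continue  # filtering: drop noise before it consumes bandwidth
        key = (event["host"], event["event_id"], mask_user(event["user"]))
        counts[key] += 1  # aggregation: collapse identical events
    return [
        {"host": host, "event_id": event_id, "user_hash": user_hash, "count": n}
        for (host, event_id, user_hash), n in counts.items()
    ]


if __name__ == "__main__":
    sample = [
        {"host": "web01", "event_id": 4625, "user": "alice", "severity": 4},
        {"host": "web01", "event_id": 4625, "user": "alice", "severity": 4},
        {"host": "web01", "event_id": 4624, "user": "bob", "severity": 1},
    ]
    for record in process_at_edge(sample):
        print(record)
```

In this sketch, three raw events become a single forwarded record, and the cleartext username never leaves the host.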

Hybrid security data collection in practice

In a typical enterprise deployment, agentless collection is used for the majority of systems, often covering most Windows and Linux servers. Agents are introduced only in high-security zones, isolated environments, or on systems where deeper behavioral telemetry is required.

Network devices and infrastructure components are collected through standard protocols such as Syslog, NetFlow, and SNMP. At the same time, cloud services and SaaS platforms are integrated via APIs and native cloud services. This model avoids all-or-nothing collection decisions and allows SOC teams to balance coverage, depth, and operational efficiency deliberately.
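The deployment pattern described above amounts to a small decision policy. The following Python sketch shows one way such a policy could be expressed. The `Asset` fields, the category names, and the returned labels are hypothetical and chosen for illustration; they are not part of any VirtualMetric API.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    kind: str  # assumed categories: "windows", "linux", "network", "cloud"
    isolated: bool = False  # sits in an isolated or unreliable network
    needs_process_telemetry: bool = False  # requires deep behavioral visibility


def choose_collection_method(asset: Asset) -> str:
    """Pick a collection method: agentless by default, agents only where justified."""
    if asset.kind == "network":
        return "syslog/netflow/snmp"
    if asset.kind == "cloud":
        return "api"
    if asset.isolated or asset.needs_process_telemetry:
        return "agent"
    if asset.kind == "windows":
        return "agentless-winrm"
    # Remaining hosts are treated as Unix-like and reached over SSH.
    return "agentless-ssh"


if __name__ == "__main__":
    fleet = [
        Asset("dc01", "windows"),
        Asset("db07", "linux"),
        Asset("scada-gw", "linux", isolated=True),
        Asset("core-sw1", "network"),
        Asset("tenant-logs", "cloud"),
    ]
    for asset in fleet:
        print(f"{asset.name}: {choose_collection_method(asset)}")
```

Encoding the policy this way keeps the agent footprint an explicit, reviewable decision rather than a default.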

VirtualMetric’s core architectural differentiators

Security-first design through data plane and control plane separation

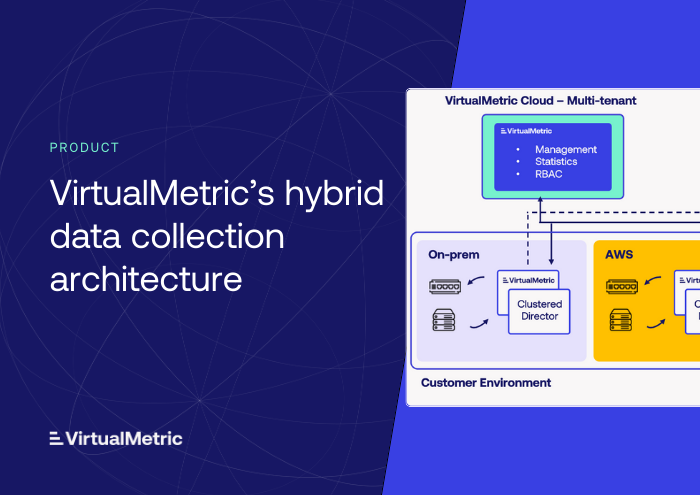

VirtualMetric DataStream enforces a strict separation between data plane and control plane operations. This separation is a foundational architectural choice driven by enterprise security, compliance, and data sovereignty requirements.

The control plane, delivered through VirtualMetric Cloud, provides centralized pipeline configuration, fleet management across Directors and Agents, real-time monitoring, and role-based access control. Crucially, it does not process, inspect, or store customer telemetry.

The data plane operates entirely within customer-controlled infrastructure. VirtualMetric Directors ingest, process, transform, and route telemetry locally. VirtualMetric Agents, where deployed, communicate only with local Directors. At no point is raw customer log data transmitted to VirtualMetric Cloud or any external service.

This design ensures sensitive security telemetry remains under full customer control, supports regulatory requirements such as GDPR, HIPAA, and SOX, and minimizes network exposure by requiring only a single outbound HTTPS connection for management.

Identity-based authentication and secure multi-tenant operation

VirtualMetric DataStream eliminates traditional credential management overhead through native support for identity-based authentication.

In Azure environments, the platform integrates with Azure Managed Identity for credential-free authentication and automatic token rotation. In AWS environments, it leverages IAM roles and temporary session credentials.

For MSSP and multi-tenant deployments, the VirtualMetric Director Proxy enables each customer to retain full control over credentials and identities while allowing centralized operation. This preserves strict tenant isolation and data sovereignty without exposing customer infrastructure.

One hub for all your telemetry sources

VirtualMetric’s collection architecture supports a broad range of data sources without custom development or third-party connectors.

Supported network protocols include Syslog, NetFlow/IPFIX, sFlow, SNMP Traps, and TCP/UDP listeners. Streaming platforms such as Kafka, RabbitMQ, Redis Pub/Sub, and NATS are supported natively. Security-specific integrations include Cisco eStreamer and vendor APIs. Cloud integrations include Azure Monitor, Event Hubs, and Blob Storage, along with AWS CloudTrail and CloudWatch. Application-level ingestion is supported via HTTP/HTTPS, SMTP, and TFTP.

This breadth allows organizations to consolidate telemetry collection into a single processing architecture, reducing the need for parallel collectors or bespoke integrations.

Reliability through write-ahead logging and state-aware processing

VirtualMetric Directors implement a write-ahead log (WAL) architecture to ensure durability and recovery. Every incoming event is persisted to disk before processing begins.

In the event of failure or restart, processing resumes from the last committed checkpoint without manual intervention. On recovery, at most one message is duplicated and none are lost, so the Director provides zero-data-loss guarantees without requiring external systems such as Kafka.
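The general pattern behind this behavior can be sketched in a few lines of Python. This is a simplified illustration of write-ahead logging with a per-event checkpoint, not the Director's actual implementation; the class name, file names, and JSON-lines format are assumptions for this example.

```python
import json
import os
import tempfile


class WriteAheadLog:
    """Toy WAL: events are fsynced to disk first, then processed and checkpointed."""

    def __init__(self, directory):
        os.makedirs(directory, exist_ok=True)
        self.log_path = os.path.join(directory, "events.wal")  # hypothetical file name
        self.checkpoint_path = os.path.join(directory, "checkpoint")

    def append(self, event):
        """Persist an event durably before any processing happens."""
        with open(self.log_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(event) + "\n")
            f.flush()
            os.fsync(f.fileno())

    def committed(self):
        """Return how many events have been fully processed."""
        try:
            with open(self.checkpoint_path, encoding="utf-8") as f:
                return int(f.read().strip() or 0)
        except FileNotFoundError:
            return 0

    def commit(self, count):
        """Atomically record the checkpoint via write-then-rename."""
        tmp = self.checkpoint_path + ".tmp"
        with open(tmp, "w", encoding="utf-8") as f:
            f.write(str(count))
            f.flush()
            os.fsync(f.fileno())
        os.replace(tmp, self.checkpoint_path)

    def replay(self, handler):
        """Process every event after the last checkpoint, committing one at a time."""
        done = self.committed()
        if not os.path.exists(self.log_path):
            return
        with open(self.log_path, encoding="utf-8") as f:
            for index, line in enumerate(f):
                if index < done:
                    continue  # already processed before the restart
                handler(json.loads(line))
                self.commit(index + 1)


if __name__ == "__main__":
    wal = WriteAheadLog(tempfile.mkdtemp())
    for i in range(3):
        wal.append({"id": i})
    wal.replay(print)
```

Because the checkpoint advances after each handled event, a crash between handling and committing replays at most that one event on restart. That is how this sketch bounds duplication to a single message while never skipping a persisted event.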

High-throughput processing through vectorized execution

The DataStream pipeline engine is fully vectorized, processing telemetry in optimized chunks across available CPU cores. This enables high throughput with low latency while delivering data to multiple destinations in parallel, including SIEMs, data lakes, and analytics platforms.
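The batching structure behind chunked processing can be illustrated with a short Python sketch. It shows only the shape of the approach (split the stream into chunks, transform each chunk as a unit, hand chunks to a pool of workers) and makes no claim about how the DataStream engine is built. The function names and the trivial transform are assumptions, and because CPython threads do not run CPU-bound work in parallel, a real engine would rely on native code or separate processes to use multiple cores.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import islice


def chunked(iterable, size):
    """Yield successive lists of up to `size` items."""
    it = iter(iterable)
    while chunk := list(islice(it, size)):
        yield chunk


def transform_chunk(chunk):
    """Apply one transformation to a whole chunk (here: normalize the message)."""
    return [{**event, "message": event["message"].strip().lower()} for event in chunk]


def process_in_chunks(events, chunk_size=1000, workers=4):
    """Transform events chunk by chunk on a worker pool, preserving input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = pool.map(transform_chunk, chunked(events, chunk_size))
        return [event for chunk in results for event in chunk]


if __name__ == "__main__":
    raw = [{"message": f"  LOGIN FAILED {i} "} for i in range(5)]
    for event in process_in_chunks(raw, chunk_size=2, workers=2):
        print(event)
```

Working on chunks rather than single events amortizes per-call overhead and gives each worker a contiguous block of data, which is the basic idea the paragraph above describes.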

By combining vectorized execution with efficient data formats and compression, the architecture achieves 10x higher throughput than traditional JSON-based pipelines.

Multi-schema normalization and intelligent routing

VirtualMetric DataStream natively supports multiple industry-standard security schemas, such as ASIM for Microsoft Sentinel, OCSF for AWS Security Lake, ECS for Elasticsearch, CIM for Splunk Enterprise Security, and UDM for Google SecOps.

The pipeline engine automatically converts telemetry between schemas while preserving semantic integrity. A single deployment can simultaneously feed Sentinel with ASIM, Security Lake with OCSF, and Splunk with CIM, all from the same source events.
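As a rough illustration of what multi-schema output involves, the Python sketch below maps one internal event onto a few target field names. The mapping covers only source and destination IP addresses, the internal field names (`src_ip`, `dst_ip`) are hypothetical, and the target names are a simplified subset that should be checked against each schema's own specification. Real normalization also handles types, enumerations, and required fields.

```python
# Simplified, illustrative field mappings; verify against each schema's reference.
FIELD_MAPS = {
    "asim": {"src_ip": "SrcIpAddr", "dst_ip": "DstIpAddr"},
    "ocsf": {"src_ip": "src_endpoint.ip", "dst_ip": "dst_endpoint.ip"},
    "ecs": {"src_ip": "source.ip", "dst_ip": "destination.ip"},
    "cim": {"src_ip": "src", "dst_ip": "dest"},
}


def set_path(target, dotted, value):
    """Assign `value` at a dotted path, creating nested dicts as needed."""
    parts = dotted.split(".")
    for part in parts[:-1]:
        target = target.setdefault(part, {})
    target[parts[-1]] = value


def to_schema(event, schema):
    """Project an internal event onto the field names of the chosen schema."""
    out = {}
    for field, destination in FIELD_MAPS[schema].items():
        if field in event:
            set_path(out, destination, event[field])
    return out


if __name__ == "__main__":
    event = {"src_ip": "10.0.0.5", "dst_ip": "10.0.0.9"}
    for schema in FIELD_MAPS:
        print(schema, to_schema(event, schema))
```

The same source event yields a differently shaped record per destination, which is what allows one pipeline to feed several platforms at once.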

Intelligent routing delivers the same events to multiple platforms simultaneously and supports cost-optimized data tiers with hot, warm, and cold storage strategies.
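Tiered routing of this kind can be thought of as a list of rules evaluated per event. The Python sketch below is one hypothetical way to express it; the destination names, the severity thresholds, and the rule format are assumptions for illustration and do not reflect DataStream's configuration syntax.

```python
# Each rule names a destination, its storage tier, and a match predicate.
ROUTES = [
    {"name": "siem", "tier": "hot", "match": lambda e: e["severity"] >= 4},
    {"name": "analytics", "tier": "warm", "match": lambda e: e["severity"] >= 2},
    {"name": "data-lake", "tier": "cold", "match": lambda e: True},
]


def route(event, routes=ROUTES):
    """Return every destination whose rule matches; one event may fan out to several."""
    return [r["name"] for r in routes if r["match"](event)]


if __name__ == "__main__":
    for severity in (5, 3, 1):
        print(severity, route({"severity": severity}))
```

In this sketch, high-severity events reach all three destinations, while low-severity events go only to inexpensive cold storage, which is where the cost savings of tiering come from.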

An architecture designed to remove tradeoffs

VirtualMetric DataStream combines agentless-first collection with optional agents, local data processing with centralized management, strong reliability guarantees, and flexible multi-destination routing.

Rather than forcing organizations to choose between security, performance, and operational simplicity, the architecture is designed to support all three simultaneously – at scale, under governance constraints, and without unnecessary compromise.

See VirtualMetric DataStream in action

Start your free trial to experience safer, smarter data routing with full visibility and control.