Last week, the cybersecurity industry received a signal it cannot afford to ignore.

Anthropic announced Claude Mythos Preview: a general-purpose frontier AI model that, without any explicit training for the task, autonomously discovered and fully exploited zero-day vulnerabilities across every major operating system and web browser. Not theoretical capabilities. A 17-year-old remote code execution flaw in FreeBSD’s NFS server, fully weaponized without a single human in the loop after an initial prompt. A 27-year-old crash bug in OpenBSD. A 16-year-old vulnerability in FFmpeg’s H.264 codec. Thousands more still under coordinated disclosure.

The performance gap versus its predecessor is staggering. Where Claude Opus 4.6 succeeded at autonomous exploit development roughly zero percent of the time, Mythos Preview succeeded 181 times in the same test. And this was not a security research tool. It was a general reasoning model whose coding capability got good enough that exploitation became a side effect.

Anthropic is not releasing it publicly. For now. But the direction of travel is clear: capabilities at this level will eventually become more broadly available. The question is not whether security teams will face AI-generated exploits at scale. It is when, and whether your infrastructure will be able to see them when they arrive.

The uncomfortable truth about breach detection

Before we talk about AI attackers, we need to talk about a problem that predates them.

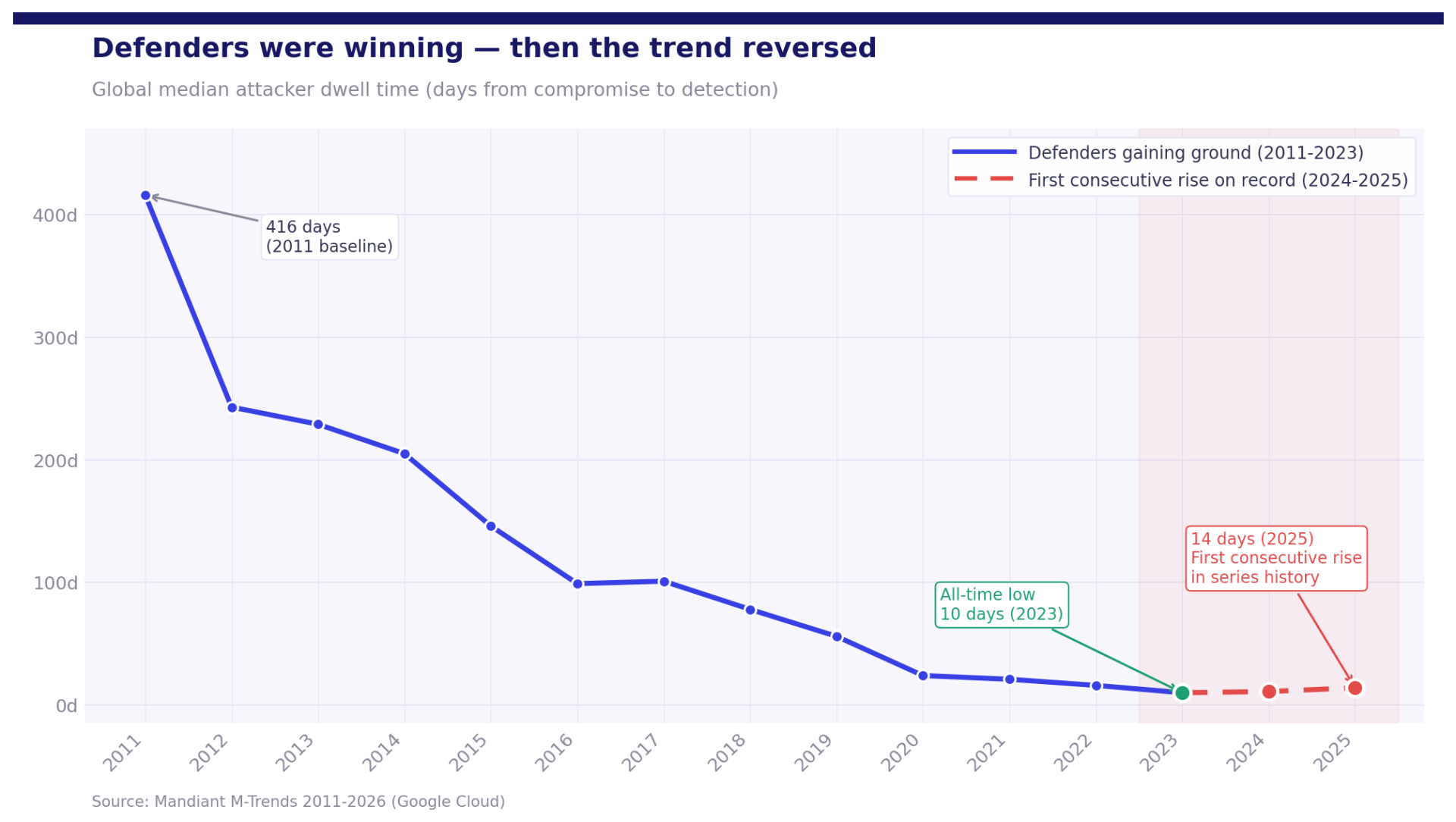

Mandiant has tracked global median dwell time, the number of days an attacker sits undetected in an environment, every year since 2011. The trend for a decade was genuinely encouraging: from 416 days in 2011, down to 205 days in 2014, down to 56 days in 2019, reaching an all-time low of 10 days in 2023. A decade of defenders genuinely closing the gap.

Then something changed. In 2024, median dwell time rose to 11 days. In 2025, it rose again to 14. According to Mandiant’s M-Trends 2026 report, this is the first consecutive year-on-year increase since the series began. Defenders had been winning for over a decade. Now, for the first time, they are losing ground.

That reversal started before Mythos. Before AI-generated exploits at scale. It is worth sitting with that for a moment.

Most breaches are not detected in the data organizations collect. They are detected in the data organizations didn’t collect, didn’t normalize, or couldn’t correlate in time. Incident responders see this pattern constantly. When they arrive at a breach, the evidence of the attacker’s initial foothold is almost never in the SIEM. It was never ingested, or it was collected in a format that made correlation impossible, or it was dropped because volume was too high and cost too great.

Data from incident response engagements points to something striking: 75% of identity attacks exploit logging gaps. Not misconfigured systems, not weak passwords. Blind spots in what was recorded in the first place.

Now think about what happens to that dwell time trend when attack volume and velocity accelerate. When exploit chains that used to take a skilled team weeks to develop can be generated autonomously in hours. The 10-day window defenders fought for a decade to reach does not hold in that world. Not without the right data infrastructure underneath it.

Directors and Clusters now also expose live Console Logs directly in the interface, giving real-time visibility into service-level operations and diagnostics from the same place you manage your configuration.

Five things that need to be true about your security data infrastructure

The Mythos moment is not primarily a detection engineering problem or a threat intelligence problem. It is a data infrastructure problem. Before any of the more sophisticated defensive capabilities can function, like AI-assisted triage, automated response, or autonomous SOC, certain things need to be true about how your telemetry is collected, processed, and stored.

Speed of collection must match speed of attack

Traditional logging architectures were designed for a world where attacks unfolded over days or weeks. That world is eroding. Mandiant’s red teams consistently report needing only five to seven days on average to achieve their objectives once inside a network. An AI-assisted attacker generating exploit variants autonomously compresses that further. When the attack moves that fast, detection depends on near-real-time telemetry. High-throughput, low-latency collection from every source, endpoints, network, cloud, identity, OT, is not a performance optimization. It is a prerequisite for detection being possible at all.

Visibility gaps are the attack surface

Security teams tend to think of their attack surface in terms of systems and configurations. But for a sophisticated attacker, and increasingly for an autonomous AI agent, the more interesting surface is the gaps in what you log. If a log source is not connected, if certain event types are deprioritized to manage SIEM costs, if coverage is inconsistent across hybrid and multi-cloud environments: those blind spots are where the attack will move. Not because attackers are deliberately targeting your logging gaps, but because that is where the evidence trail goes cold.

Mandiant’s 2025 report found that in 34% of 2024 intrusions, investigators could not determine an initial infection vector at all, attributing this directly to deficiencies in enterprise logging. Nearly one in three breaches, and the entry point was simply not recorded.

Normalized data is the prerequisite for AI-assisted defense

Every conversation about AI-native detection, autonomous SOC, and LLM-powered investigation rests on an assumption that often goes unstated: the underlying data is clean, consistent, and structured.

You cannot build reliable machine learning detection on top of inconsistent log formats. You cannot prompt an AI analyst on telemetry where the same field means different things across different vendors. Schema-first normalization at ingest, ensuring every event regardless of source arrives in a consistent format, is the infrastructure layer that determines whether AI-assisted defense actually delivers or stays a marketing concept.

This matters more as the pace of new vulnerability classes accelerates. If Mythos-class models start generating novel attack patterns, detection models need to identify behavioral anomalies across normalized, comparable telemetry. Raw, inconsistent log data does not support that.

Your pipeline must update itself

When new vulnerability classes emerge, vendors update their log schemas. New event types appear. Existing fields change meaning. Parsers that worked last month silently fail this month.

This is the hidden liability of open-source pipeline tooling. Logstash is powerful, but it requires manual maintenance, expert configuration, and significant engineering investment every time the landscape shifts. In a world where the pace of change is accelerating, that maintenance burden compounds. A pipeline that requires a trained engineer to rebuild parsers under incident conditions is not just inconvenient. It is a gap in your defensive capability.

The question every security team should be asking: when a new attack technique emerges and vendors update their telemetry schemas overnight, how long before your pipeline reflects that? If the answer involves tickets, sprints, or engineering prioritization conversations, that is a risk worth examining.

Cost control cannot come at the expense of coverage

Rising log volumes create pressure to reduce SIEM ingestion costs. The tempting response is to cut what you collect. It is also the dangerous one. Incident responders universally report that the logs most critical to understanding a breach are precisely the ones that got deprioritized. Intelligent routing and tiered storage, sending high-fidelity data to your primary SIEM while routing lower-signal telemetry to cold storage with full auditability, is how mature organizations manage this tension. Blanket cuts that create blind spots are not cost control. They are deferred incident response costs.

Questions worth asking about your roadmap

The Claude Mythos announcement is not an isolated event. It is a data point on a trajectory the industry has been watching for two years: AI capabilities improving at a pace that is compressing the attacker-defender cycle.

But the Mandiant dwell time data tells us something important: the problem was already getting harder before Mythos arrived. The first consecutive rise in dwell time in over a decade did not happen because detection engineering got worse. It happened because the attack surface is growing faster than coverage is keeping up.

The best long-term strategy for defenders is to move away from static, siloed tools and toward dynamic security workflows. But that shift only delivers on its promise if the foundational layer is solid. Before investing in the next generation of AI-assisted detection, it is worth asking some honest questions about your current data infrastructure:

- Do you have complete, consistent log coverage across all environments: cloud, on-premise, identity, endpoints, OT?

- Is your telemetry normalized at ingest, or do your detection rules need to account for dozens of vendor-specific formats?

- How long does it take your pipeline to adapt when a vendor changes their log schema?

- Are your cost-reduction decisions creating visibility gaps you have not fully mapped?

- Can your collection and processing keep pace with near-real-time attack timelines?

If any of those questions surface gaps, now is the time to address them. Not after the attack tempo increases.

The organizations that will navigate this moment well are not necessarily those with the most sophisticated detection logic. They are the ones whose data infrastructure is fast, complete, and maintainable enough to support whatever detection capabilities they deploy on top of it.

The infrastructure investment needs to happen before the wave.

At VirtualMetric, we built DataStream to address exactly these infrastructure requirements – high-throughput collection, schema-first normalization, intelligent routing, and automated pipeline maintenance – for SOC teams and MSSPs managing security data at scale. If you’re evaluating your current pipeline against the questions above, we’d be glad to talk.

See VirtualMetric DataStream in action

Start your free trial to experience safer, smarter data routing with full visibility and control.