A practical architectural guide for security leaders

Security leaders are facing sustained pressure to reduce SIEM costs while maintaining detection coverage and compliance readiness. In many environments, telemetry volumes continue to grow even when the actual risk profile remains stable.

The root cause is structural. Modern environments generate logs across endpoints, identity systems, firewalls, SaaS platforms, APIs, containers, and cloud workloads. SIEM platforms increasingly price on ingestion volume, so as data volume grows, cost grows roughly in proportion.

Most organizations respond tactically. They tune alerts, shorten retention, or apply table transformations inside the SIEM to improve usability, but these actions rarely reduce the ingestion bill.

Others control costs more directly by limiting what they send. They cap daily ingest, delay onboarding new data sources, or remove high-volume feeds or privileged access telemetry. Some rely on Logstash to shrink volume, often across multiple distributed installations that require specialist skills to maintain. In some cases, the SIEM is deliberately held at a fixed daily ceiling, even when additional telemetry would improve visibility, because the budget cannot absorb further growth.

These measures stabilize spending in the short term, but they do not address the economic mechanism that drives it.

What actually drives SIEM spend

In ingestion-based pricing models, cost is primarily determined at the moment data crosses the SIEM boundary. Once indexed, that volume is billable regardless of how much of the event supports detection.

SIEM cost is generally shaped by:

- Daily ingestion volume

- Retention tier and duration

- Storage class

- Query and analytics compute

- Replication and backup requirements

Among these, ingestion volume is usually the dominant factor.

Filtering or transforming data inside the SIEM improves usability, but it does not reverse ingestion charges.

For organizations operating at scale, the difference between post-ingestion refinement and pre-ingestion control becomes significant.
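The difference can be made concrete with a small cost model. This is a minimal sketch under the ingestion-priced premise described above; the price per GB, the 30-day month, and the function name are assumptions for illustration, not vendor figures.

```python
# Assumed list price; real pricing depends on vendor, tier, and contract.
ASSUMED_PRICE_PER_GB = 2.50


def monthly_cost(raw_gb_per_day, upstream_reduction=0.0, in_siem_reduction=0.0,
                 price_per_gb=ASSUMED_PRICE_PER_GB, days=30):
    """Estimate the monthly ingestion bill for a given raw daily volume."""
    # Only volume removed before the SIEM boundary lowers the billable figure.
    billable_gb_per_day = raw_gb_per_day * (1.0 - upstream_reduction)
    # in_siem_reduction is deliberately unused: in this model it shrinks what
    # analysts query, not what was already billed at ingestion.
    return billable_gb_per_day * price_per_gb * days


baseline = monthly_cost(100)
refined_in_siem = monthly_cost(100, in_siem_reduction=0.5)
reduced_upstream = monthly_cost(100, upstream_reduction=0.5)
```

In this model, halving the data inside the SIEM leaves the bill unchanged, while halving it upstream halves the bill.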

The proven levers for reducing SIEM cost

There are several ways to reduce SIEM cost. Not all of them are equally safe or sustainable:

1. Reducing data volume before ingestion. If ingestion is billable, reducing payload size upstream has a direct economic impact.

2. Tiering storage. Detection-critical data belongs in high-performance analytics tiers. Bulk telemetry, compliance archives, and verbose logs often do not.

3. Schema discipline. Duplicate fields, verbose extensions, and redundant metadata inflate indexed size without improving detection quality.

4. Alignment between ingestion and detection use cases. Many environments ingest more telemetry than active detection logic consumes.

5. Transport efficiency and compression. This affects network and storage costs but does not, on its own, reduce ingestion billing.

Many organizations focus on levers four and five. Meaningful cost reduction typically requires addressing the first three.
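Before applying the first and third levers, it helps to know which fields account for most of the volume. The sketch below profiles a sample of events by serialized size per field. It assumes events are already parsed into dictionaries with JSON-serializable values; the function name is hypothetical.

```python
import json
from collections import Counter


def field_byte_share(events):
    """Total serialized bytes per field across a sample, largest first."""
    totals = Counter()
    for event in events:
        for key, value in event.items():
            # Measure each field as it would appear in a JSON payload.
            totals[key] += len(json.dumps({key: value}).encode("utf-8"))
    return totals.most_common()


sample = [{"a": "x"}, {"a": "y", "b": "zzzz"}]
profile = field_byte_share(sample)
```

Fields at the top of the profile that no detection rule or investigation workflow references are the natural first candidates for review.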

Why SIEM-side optimization has limits

SIEM platforms often provide tools such as data collection rules, table transformations, and workbooks for cost monitoring. These capabilities are valuable and help identify top billable tables, detect ingestion anomalies, and tune specific event types.

However, in ingestion-priced models, billing occurs when data enters the workspace. Transformations applied afterward improve signal quality but do not change ingestion economics.

Monitoring ingestion anomalies inside the SIEM is useful. Controlling ingestion before it becomes billable is more effective.

The architectural principle: control cost before ingestion

If ingestion volume drives cost, then cost control must happen upstream of the SIEM.

This principle has led to increased attention to the concept of a security data pipeline platform. Gartner, Forrester, SACR, and other analysts have described a growing separation between data collection, data processing, and analytics. In this model, telemetry is collected and prepared outside the analytics platform. Only the data that needs to be indexed for active detection is sent into the SIEM. The remainder can be retained in lower-cost storage tiers.

A security data pipeline typically performs several functions:

- Data collection from a large number of data sources.

- Normalization into a consistent schema.

- Enrichment to preserve the context required for detection.

- Reduction of redundant or low-value fields and events.

- Routing of events to appropriate storage tiers based on operational need.

This separation allows organizations to align cost with security value.
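The functions listed above can be sketched as a chain of small, pure steps. This is an illustrative outline only, not any product's implementation: every field name, alias, and the routing rule below is hypothetical.

```python
# All field names, aliases, and the routing rule are hypothetical.
FIELD_ALIASES = {"src": "src_ip", "dst": "dst_ip", "act": "action"}
DETECTION_FIELDS = {"src_ip", "dst_ip", "action", "event_type", "asset_owner"}
INDEXED_EVENT_TYPES = {"traffic", "utm"}


def normalize(event):
    """Map source-specific field names onto one consistent schema."""
    return {FIELD_ALIASES.get(key, key): value for key, value in event.items()}


def enrich(event, asset_owners):
    """Add context that detections need, here an owner looked up by source IP."""
    owner = asset_owners.get(event.get("src_ip"), "unknown")
    return {**event, "asset_owner": owner}


def reduce_fields(event):
    """Keep only fields on the detection allowlist."""
    return {k: v for k, v in event.items() if k in DETECTION_FIELDS}


def route(event):
    """Send event types with active detections to the SIEM, the rest to archive."""
    return "siem" if event.get("event_type") in INDEXED_EVENT_TYPES else "archive"


def process(raw_event, asset_owners):
    event = reduce_fields(enrich(normalize(raw_event), asset_owners))
    return route(event), event


raw = {"src": "10.0.0.5", "dst": "8.8.8.8", "act": "deny",
       "event_type": "traffic", "verbose_ext": "x"}
destination, optimized = process(raw, {"10.0.0.5": "finance"})
```

Enrichment runs before reduction so that context added for detection is evaluated against the same allowlist as the original fields.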

What safe SIEM cost reduction requires

Reducing ingestion volume without damaging detection capability requires careful controls.

First, fields required by parsers, analytics rules, and investigation workflows must be preserved. Removing a field used in correlation logic creates operational risk.

Second, optimization behavior must be deterministic and auditable. In regulated industries, security teams must be able to explain how data is processed and what is removed. Black-box suppression techniques create uncertainty.

Third, organizations must retain the ability to access full-fidelity logs when necessary. Compliance audits, forensic investigations, and incident response often require original event records. Storing full logs in lower-cost tiers while indexing optimized subsets in the SIEM is a common pattern.

These constraints define the difference between cost-cutting and controlled cost engineering.
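The three constraints above can be sketched together. This is a minimal illustration under stated assumptions: the rule names, the rule-to-field map, the drop list, and the ruleset version string are all hypothetical, and a real deployment would derive the field map from its own parsers and analytics rules.

```python
# Hypothetical rule-to-field map and drop list, for illustration only.
RULE_FIELDS = {
    "brute_force_login": {"src_ip", "user", "action"},
    "outbound_to_rare_dst": {"src_ip", "dst_ip"},
}
DROP_FIELDS = frozenset({"raw_extension", "device_banner"})
RULESET_VERSION = "example-1"


def rules_broken_by(drop_fields):
    """Constraint 1: list rules that depend on a field the plan would remove."""
    return sorted(rule for rule, needed in RULE_FIELDS.items()
                  if needed & drop_fields)


def reduce_with_audit(event):
    """Constraint 2: the same input and ruleset always yield the same result."""
    kept = {k: v for k, v in event.items() if k not in DROP_FIELDS}
    audit = {
        "ruleset_version": RULESET_VERSION,
        "removed_fields": sorted(k for k in event if k in DROP_FIELDS),
    }
    return kept, audit


def fan_out(event):
    """Constraint 3: archive keeps the original; the SIEM gets the reduced copy."""
    broken = rules_broken_by(DROP_FIELDS)
    if broken:
        raise ValueError(f"drop list would break rules: {broken}")
    reduced, audit = reduce_with_audit(event)
    return {"archive": dict(event), "siem": reduced, "audit": audit}


event = {"src_ip": "10.0.0.5", "user": "alice", "action": "deny",
         "raw_extension": "x=1"}
result = fan_out(event)
```

Because the reduction is a fixed rule rather than a statistical decision, the audit record states exactly which fields were removed and under which ruleset version.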

How this works in practice

To illustrate the architectural difference, consider a real scenario using FortiGate firewall logs in Microsoft Sentinel.

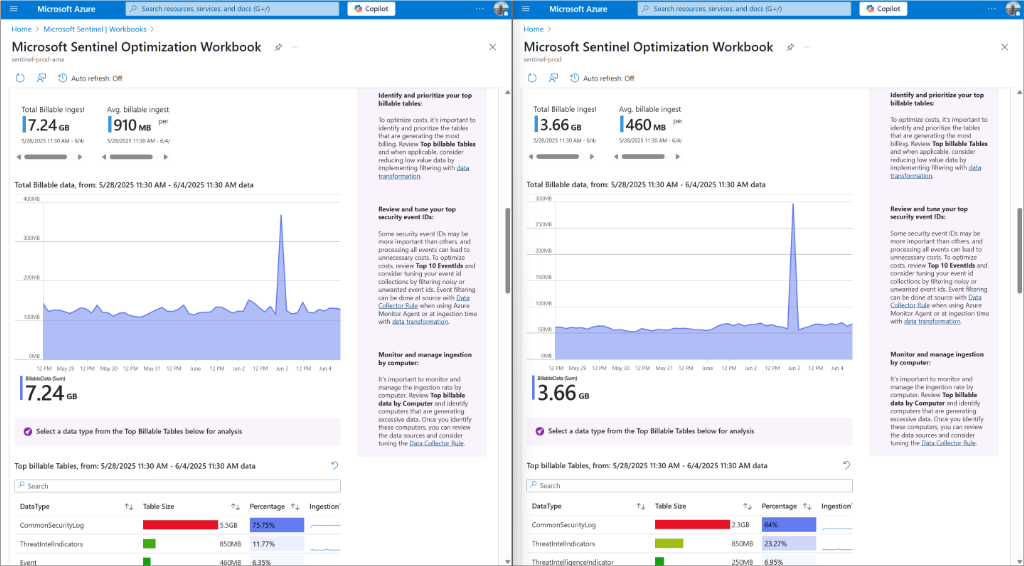

In a baseline configuration, FortiGate logs in CEF format were sent through Azure Monitor Agent directly into Microsoft Sentinel. The Microsoft Sentinel Optimization Workbook showed a total billable ingestion of 7.24 GB during the selected period. The CommonSecurityLog table accounted for 5.5 GB, representing the majority of the cost.

When the same logs were processed through a pre-ingestion pipeline before entering Sentinel, total billable ingestion dropped to 3.66 GB for the same time range. The CommonSecurityLog table decreased to 2.36 GB.
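Expressed as percentages, the reported figures work out as follows. The helper name is an assumption; the inputs are the volumes quoted above.

```python
def reduction_pct(before_gb, after_gb):
    """Percentage reduction from before_gb to after_gb, to one decimal place."""
    return round((before_gb - after_gb) / before_gb * 100, 1)


total_reduction = reduction_pct(7.24, 3.66)        # total billable ingestion
common_security_log = reduction_pct(5.5, 2.36)     # CommonSecurityLog table

print(total_reduction)      # 49.4
print(common_security_log)  # 57.1
```

Total billable ingestion fell by roughly 49%, and the CommonSecurityLog table by roughly 57%.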

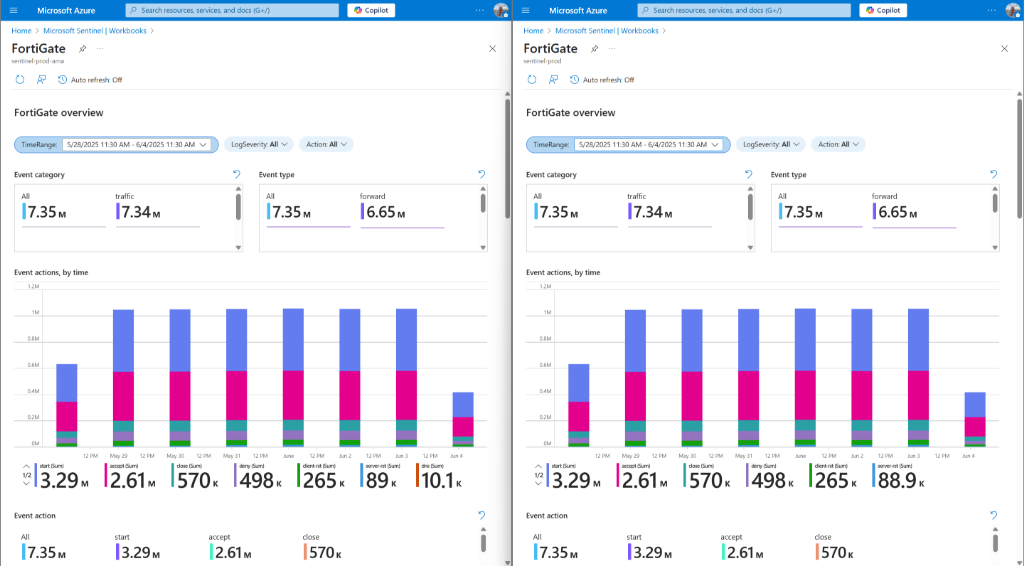

The FortiGate operational workbook confirmed that event categories, event types, and action distributions remained consistent. Detection logic and dashboards continued to function as expected. Instead of indexing verbose extension fields and redundant attributes, the pipeline preserved only the fields required for detection and investigation.

Screenshots from the Microsoft Sentinel Optimization Workbook clearly show the reduction in total billable ingestion between the direct ingestion model and the pre-ingestion pipeline model.

This example demonstrates the architectural principle under controlled conditions with identical sources and time ranges.

Implementing the pipeline model in practice: VirtualMetric DataStream

One way to implement upstream control is through a security data pipeline platform such as VirtualMetric DataStream.

DataStream processes telemetry before it crosses the SIEM ingestion boundary. It performs normalization, deterministic field-level reduction, enrichment, and routing upstream of analytics platforms. Detection-relevant fields are preserved. Redundant or low-value extensions are removed based on rule-based logic rather than probabilistic suppression.

Optimized events are forwarded to the SIEM for indexing. Full-fidelity logs can be retained in cost-efficient tiers when required for compliance or forensic retrieval.

In environments operating multiple analytics platforms, optimization logic is centralized and applied consistently across destinations. This reduces governance complexity and prevents drift between pipelines.

The FortiGate example above was implemented using this model.

When a security data pipeline approach makes sense

This approach is most relevant in environments where:

- SIEM licensing is driven primarily by ingestion volume.

- High-volume log sources, such as firewalls or network devices, dominate cost.

- Ingestion growth outpaces growth in detection use cases.

- Budget pressure exists without tolerance for reduced visibility.

For smaller environments with modest ingestion levels, native SIEM tuning may be sufficient. For enterprises ingesting millions of daily events, upstream cost control becomes a strategic lever rather than a tactical adjustment.

Aligning cost with security value

Analyst commentary increasingly reflects a broader trend: organizations are moving from collecting maximum telemetry toward engineering data flows that serve defined detection and response objectives.

Organizations that adopt this architectural separation commonly observe measurable reductions in ingestion volume along with improved signal quality and clearer operational focus.

To understand how this model can be implemented in practice, including deterministic field-level optimization and controlled ingestion reduction, see: SIEM Cost Reduction with VirtualMetric DataStream.
