Security teams are increasingly turning to Amazon Security Lake to consolidate security telemetry across cloud, network, and on-prem environments. Security Lake provides a unified, OCSF-based data repository that powers analytics, threat hunting, and machine learning across AWS services and third-party tools.

But to take advantage of Security Lake’s capabilities, organizations must deliver clean, normalized, OCSF-compliant data, and this is where challenges arise.

Most environments generate logs in dozens of different formats: native syslog, CEF, LEEF, Windows Security Events, firewall events, DNS logs, and more. Without a proper pipeline, teams struggle with normalization, schema alignment, and consistent routing into the correct Security Lake buckets and OCSF classes.

VirtualMetric DataStream now integrates directly with Amazon Security Lake, enabling organizations to send fully normalized, OCSF-aligned security telemetry to AWS without building their own transformation pipelines.

This integration bridges the complexity between diverse security data sources and Security Lake’s OCSF requirements, giving SOC teams cleaner data, faster analytics, and a unified security view inside AWS.

The challenges teams face with Amazon Security Lake today

Amazon Security Lake is built around standardization, specifically OCSF and Parquet. That design is what makes large-scale analytics possible, but it also exposes the realities of modern telemetry:

- The data is heterogeneous by default. Each vendor produces logs differently (Fortinet ≠ Palo Alto ≠ Check Point). Even within the same category of tool, field names, event semantics, and required enrichment vary.

- Windows security telemetry is not “one format.” Authentication, process creation, audit policy, firewall, and DNS events typically require multi-stage parsing and normalization to remain consistent and usable downstream.

- Schema mapping errors become ingestion and analytics failures. When event classes are misidentified or fields land in the wrong schema, downstream detections, queries, and integrations break or never work correctly in the first place.

- Operational load increases as volume increases. High-volume logs demand stable pipelines that can batch, compress, retry, and route consistently, without manual babysitting.

The net effect is predictable: Security Lake adoption becomes a data engineering project when most SOC teams need it to be an operational capability.
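To make the schema-mapping failure mode concrete, here is a minimal Python sketch of a pre-ingestion sanity check. It assumes events are plain dictionaries. The `check_ocsf_event` helper is hypothetical and is not part of DataStream or any AWS SDK. The attribute names and the `type_uid = class_uid * 100 + activity_id` rule follow the OCSF base event definition, but a real validator would check far more than this.

```python
from typing import Dict, List

# Attributes the OCSF base event marks as required (checked for presence only).
REQUIRED = ("class_uid", "category_uid", "activity_id", "type_uid",
            "severity_id", "time", "metadata")


def check_ocsf_event(event: Dict) -> List[str]:
    """Return a list of problems found in one event (empty list means none found)."""
    problems = [f"missing required attribute: {name}"
                for name in REQUIRED if name not in event]
    # OCSF derives type_uid from the class and the activity, so a mismatch
    # usually means the event was assigned to the wrong class.
    if all(k in event for k in ("class_uid", "activity_id", "type_uid")):
        expected = event["class_uid"] * 100 + event["activity_id"]
        if event["type_uid"] != expected:
            problems.append(
                f"type_uid {event['type_uid']} does not match "
                f"class_uid * 100 + activity_id ({expected})")
    return problems


good = {"class_uid": 4001, "category_uid": 4, "activity_id": 6,
        "type_uid": 400106, "severity_id": 1, "time": 1700000000000,
        "metadata": {"version": "1.1.0"}}
# Same payload, but misidentified as an Authentication event (class 3002).
misclassified = dict(good, class_uid=3002)
```

Running the check on `misclassified` reports the `type_uid` mismatch, which is the kind of error that otherwise surfaces later as a broken query or detection.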

Introducing the DataStream + Amazon Security Lake integration

VirtualMetric DataStream’s Amazon Security Lake integration is designed to remove the “build and maintain your own pipeline” burden.

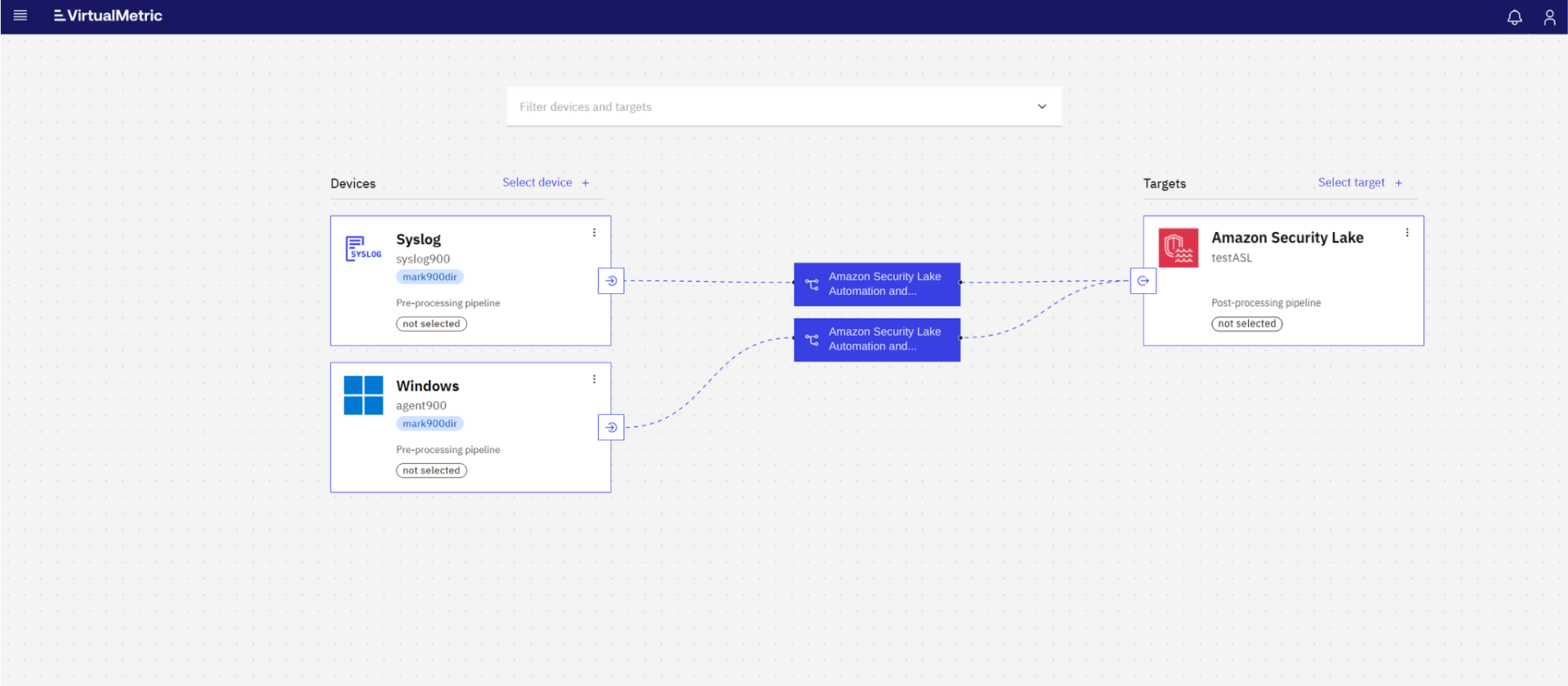

DataStream sits between your sources and Amazon Security Lake and automates what teams typically have to implement with scripts, glue code, or fragile transformations:

- Detect log formats and vendors

- Normalize data into CommonSecurityLog (CSL), ECS, or ASIM

- Convert all events into OCSF-compliant Parquet

- Route logs to the correct Security Lake buckets

- Maintain clean, structured, analytics-ready telemetry

Instead of shaping your environment around Amazon Security Lake’s ingestion requirements, you standardize once in DataStream and deliver OCSF-ready telemetry into AWS by design.

What security teams gain

This integration is not just about “getting logs into the lake.” It’s about making Amazon Security Lake immediately usable, at scale, across diverse telemetry sources.

1. Simplified onboarding and intelligent routing

DataStream automatically detects the log format (syslog, CEF, LEEF, Windows events, and common firewall vendor patterns) and routes each event to the appropriate destination based on the OCSF schema and bucket mapping you define.
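The routing idea can be illustrated with a short Python sketch. This is not DataStream's configuration or implementation. The bucket names and the `group_by_bucket` helper are hypothetical, and only the OCSF class identifiers are taken from the OCSF schema.

```python
from collections import defaultdict
from typing import Dict, Iterable, List

# Hypothetical mapping from OCSF class_uid to a destination bucket name.
BUCKET_BY_CLASS: Dict[int, str] = {
    1007: "example-lake-process",   # Process Activity
    3002: "example-lake-auth",      # Authentication
    4001: "example-lake-network",   # Network Activity
    4003: "example-lake-dns",       # DNS Activity
}


def group_by_bucket(events: Iterable[dict],
                    fallback: str = "example-lake-unmapped") -> Dict[str, List[dict]]:
    """Group normalized events by destination, keyed on their OCSF class."""
    grouped: Dict[str, List[dict]] = defaultdict(list)
    for event in events:
        bucket = BUCKET_BY_CLASS.get(event.get("class_uid"), fallback)
        grouped[bucket].append(event)
    return dict(grouped)


events = [{"class_uid": 4001}, {"class_uid": 3002},
          {"class_uid": 4001}, {"class_uid": 9999}]
grouped = group_by_bucket(events)
```

Sending unmapped classes to an explicit fallback, rather than dropping them, keeps mapping gaps visible during onboarding.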

2. OCSF-compliant normalization across sources

DataStream performs multi-stage normalization:

- CEF/LEEF → CommonSecurityLog

- ECS → ASIM

- ASIM → OCSF

So events arrive aligned to OCSF requirements without teams writing custom transformation rules.
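As a rough illustration of what staged normalization involves, the Python sketch below chains three small functions for a single firewall event. It is a simplified sketch under stated assumptions, not DataStream's actual logic. The function names are hypothetical, the CSL-to-ASIM bridge is an assumption made only to connect the stages, and the resulting OCSF event is partial. Field names follow the public CEF, CommonSecurityLog, ASIM, and OCSF conventions.

```python
import re
from typing import Dict

# key=value pairs in the CEF extension; values may contain spaces.
_EXT = re.compile(r"(\w+)=(.*?)(?=\s+\w+=|$)")


def cef_to_csl(line: str) -> Dict[str, str]:
    """Stage 1: parse a CEF line into CommonSecurityLog-style columns."""
    # The header has 7 pipe-delimited fields, then the extension.
    # Escaped pipes and a leading syslog prefix are not handled here.
    parts = line.split("|", 7)
    if len(parts) != 8 or not parts[0].startswith("CEF:"):
        raise ValueError("not a CEF record")
    ext = dict(_EXT.findall(parts[7]))
    return {
        "DeviceVendor": parts[1], "DeviceProduct": parts[2],
        "Activity": parts[5], "LogSeverity": parts[6],
        "SourceIP": ext.get("src", ""), "SourcePort": ext.get("spt", ""),
        "DestinationIP": ext.get("dst", ""), "DestinationPort": ext.get("dpt", ""),
    }


def csl_to_asim(csl: Dict[str, str]) -> Dict[str, str]:
    """Stage 2 (assumed bridge): rename CSL columns to ASIM network-session fields."""
    return {
        "EventVendor": csl["DeviceVendor"], "EventProduct": csl["DeviceProduct"],
        "SrcIpAddr": csl["SourceIP"], "SrcPortNumber": csl["SourcePort"],
        "DstIpAddr": csl["DestinationIP"], "DstPortNumber": csl["DestinationPort"],
    }


def asim_to_ocsf(rec: Dict[str, str]) -> dict:
    """Stage 3: build a partial OCSF Network Activity (class 4001) event."""
    return {
        "class_uid": 4001, "category_uid": 4,
        "src_endpoint": {"ip": rec["SrcIpAddr"], "port": int(rec["SrcPortNumber"])},
        "dst_endpoint": {"ip": rec["DstIpAddr"], "port": int(rec["DstPortNumber"])},
    }


line = ("CEF:0|ExampleVendor|ExampleFW|1.0|100|Connection allowed|3|"
        "src=10.0.0.1 spt=51515 dst=192.0.2.10 dpt=443")
event = asim_to_ocsf(csl_to_asim(cef_to_csl(line)))
```

Keeping each stage as a separate function means an intermediate schema can be inspected or sent to another destination without rerunning the earlier stages.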

3. Vendor-aware pipelines that preserve security context

Rather than flattening everything into generic fields, DataStream applies vendor-specific logic for major firewall and network platforms, keeping critical context while producing clean OCSF-ready telemetry.

4. Windows security and firewall event normalization that holds up in production

Key Windows security events (authentication, process creation, privilege assignment, audit and policy signals), Windows Firewall traffic, and DNS events can flow through structured pipelines (ECS→ASIM→OCSF) to maintain semantic correctness and compatibility.

5. High performance and reliability for high-volume telemetry

DataStream manages batching, multipart uploads, compression, retry logic, and pipeline isolation to support consistent ingestion even as volume and vendor diversity increase.
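For readers who have built this by hand, the batching and retry portion typically looks something like the Python sketch below. It is illustrative only and does not describe DataStream internals. The `Batcher` class, its parameters, and the injected `upload` callable are all hypothetical.

```python
import time
from typing import Callable, List


class Batcher:
    """Buffer events and upload them in batches, retrying with exponential backoff."""

    def __init__(self, upload: Callable[[List[dict]], None],
                 max_events: int = 1000, max_attempts: int = 3,
                 base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.upload = upload
        self.max_events = max_events
        self.max_attempts = max_attempts
        self.base_delay = base_delay
        self.sleep = sleep
        self._pending: List[dict] = []

    def add(self, event: dict) -> None:
        self._pending.append(event)
        if len(self._pending) >= self.max_events:
            self.flush()

    def flush(self) -> None:
        if not self._pending:
            return
        batch, self._pending = self._pending, []
        for attempt in range(self.max_attempts):
            try:
                self.upload(batch)
                return
            except Exception:  # real code would catch only transient errors
                if attempt == self.max_attempts - 1:
                    # Put the batch back so a later flush can try again.
                    self._pending = batch + self._pending
                    raise
                # Delay doubles on each failed attempt: 0.5s, 1s, 2s, ...
                self.sleep(self.base_delay * 2 ** attempt)
```

The `sleep` function is injectable so the backoff schedule can be tested without waiting. A production pipeline would also flush on a timer and bound the total bytes per batch.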

6. Ingest once, deliver anywhere

Amazon Security Lake becomes one destination in a broader routing strategy. The same processed telemetry can also be delivered to Elastic, Splunk, Microsoft Sentinel, S3, or other storage and analytics platforms without duplicating your collection and transformation effort.

How the integration works

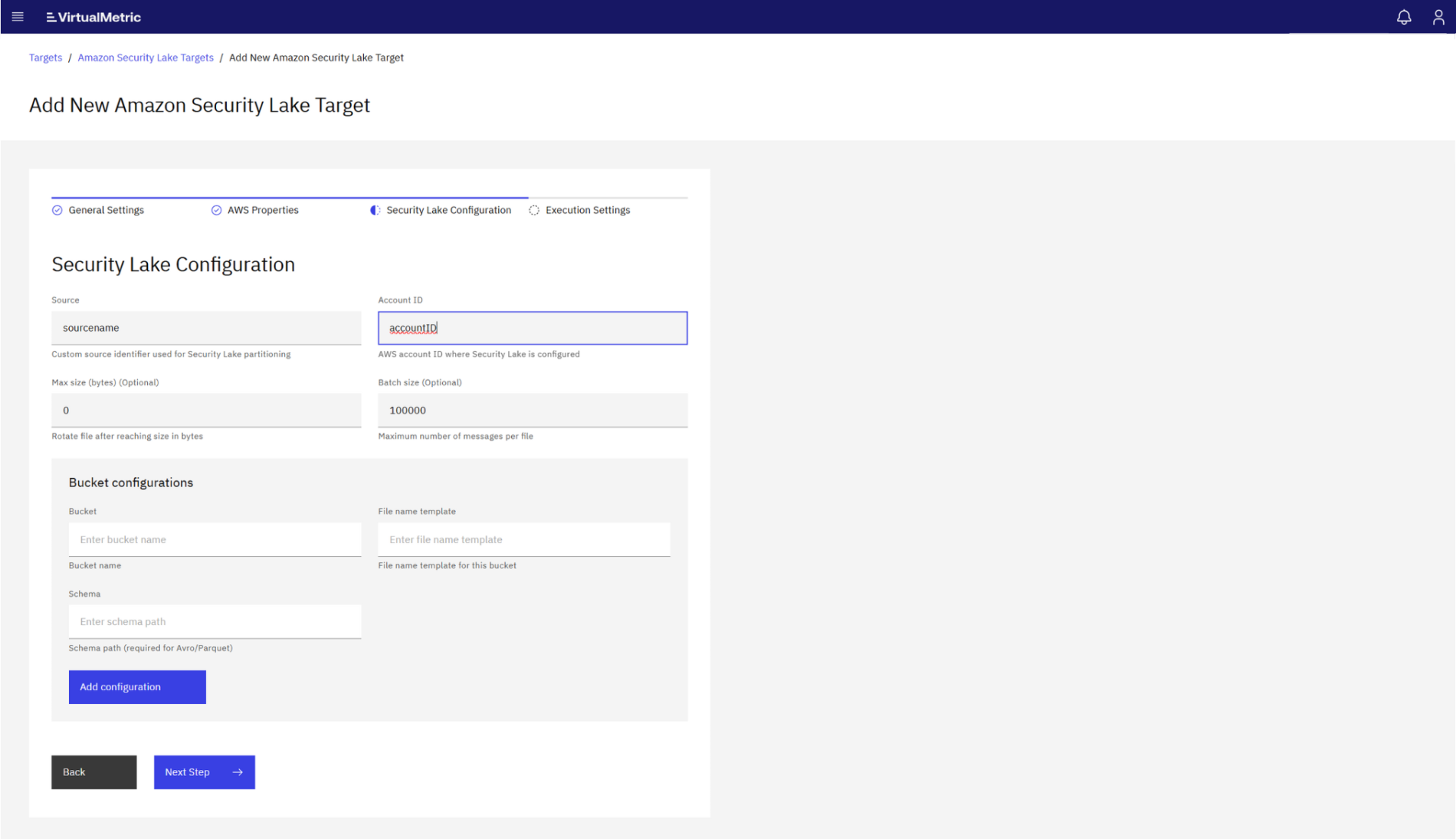

DataStream uses a dedicated awssecuritylake target to:

- Generate OCSF-compliant Parquet files

- Assign the correct OCSF schema for each event type

- Upload files to the proper Security Lake partition structure

- Authenticate via AWS credentials or IAM roles

Logs flow through the aws_lake processing pipeline, where they pass through:

- Format detection

- Vendor-specific normalization

- Multi-stage schema transformation

- OCSF mapping

- Parquet generation

- Upload into Security Lake bucket structure
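The final upload step depends on writing objects under the partition layout Security Lake expects. As a hedged illustration, the Python sketch below builds an object key prefix using the layout AWS documents for custom sources (`ext/<source>/region=<region>/accountId=<account>/eventDay=<YYYYMMDD>/`). The `partition_prefix` helper and the example values are hypothetical, and the layout should be verified against current AWS documentation before relying on it.

```python
from datetime import datetime, timezone


def partition_prefix(source_name: str, region: str, account_id: str,
                     event_time: datetime) -> str:
    """Build the S3 key prefix for one event day of a custom source."""
    if event_time.tzinfo is None:
        # Naive datetimes would be interpreted as local time; refuse them.
        raise ValueError("event_time must be timezone-aware")
    # Partition by the UTC calendar day of the event.
    day = event_time.astimezone(timezone.utc).strftime("%Y%m%d")
    return (f"ext/{source_name}/region={region}/"
            f"accountId={account_id}/eventDay={day}/")


prefix = partition_prefix(
    "example-network-activity", "eu-west-1", "123456789012",
    datetime(2024, 3, 5, 23, 30, tzinfo=timezone.utc),
)
```

Deriving the day from the event timestamp in UTC, rather than from the upload time, keeps late-arriving events in the partition where queries will look for them.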

Once ingested, Security Lake makes the data available to AWS analytics services, including Athena, OpenSearch, and SageMaker, as well as to third-party SIEM integrations.

Getting started

Getting started is straightforward:

1. Configure your Amazon Security Lake target in VirtualMetric DataStream.

2. Map your Amazon Security Lake buckets to their OCSF schema identifiers.

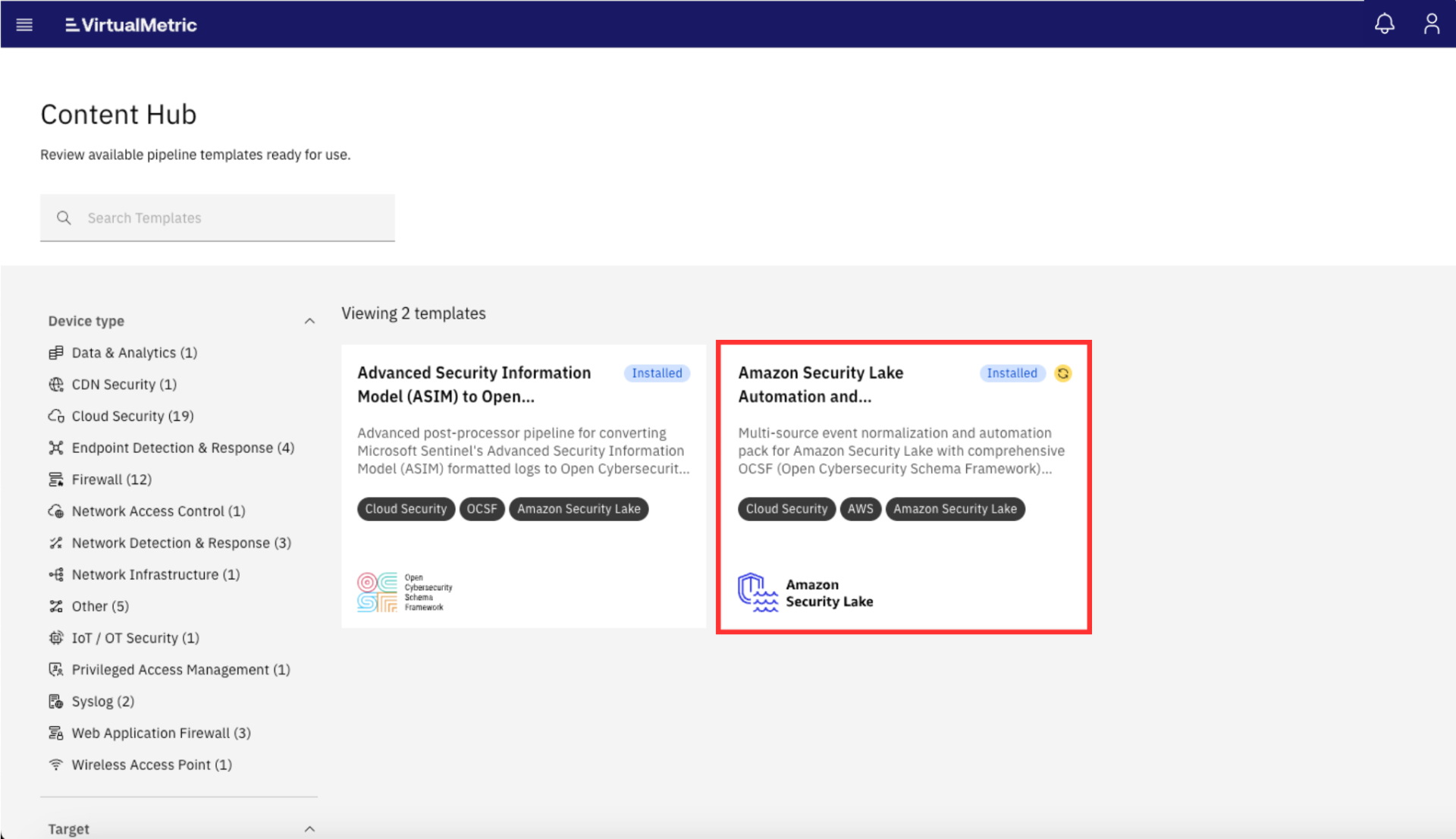

3. Enable the Amazon Security Lake Automation and Normalization Pack for automatic normalization.

4. Begin streaming logs from your environment: Windows, Linux, firewalls, cloud services, and network devices.

No manual schema work. No custom transformations. No hand-built OCSF formatting.

A better way to power your Amazon Security Lake

Amazon Security Lake is a powerful foundation for security analytics in AWS, but its value depends on the quality and consistency of the telemetry you deliver into it.

With VirtualMetric DataStream, you can operationalize Amazon Security Lake faster by turning multi-vendor, multi-format security logs into OCSF-aligned Parquet automatically, then routing them into the right place, in the right structure, at scale.

If your goal is to make Amazon Security Lake usable for real SOC work (not just storage), DataStream provides a better, more reliable path from raw telemetry to analytics-ready security data.

Want to explore how DataStream can be integrated into your AWS environment? Start with our documentation, deploy a free trial in your own infrastructure, or schedule a technical session with our engineers to review pipeline design, OCSF alignment, and Security Lake routing.
